How Generative AI in Telecommunications Actually Works: Inside the Technology

The telecommunications industry is changing as generative AI moves from experimental pilots to production-grade systems that handle millions of customer interactions, network optimizations, and operational decisions daily. Unlike traditional rule-based automation, generative AI in telecom environments relies on neural architectures that learn patterns from massive datasets, generate contextually relevant responses, and adapt to changing network conditions in real time. Understanding the mechanisms behind these systems shows why they represent a significant step forward for an industry grappling with exponential data growth, increasing service complexity, and rising customer expectations.

The mechanics of deploying generative AI in telecommunications differ substantially from those of consumer-facing AI applications. Telecom implementations require model architectures that can process streaming network telemetry, maintain low-latency response times across distributed infrastructure, and operate under strict regulatory compliance requirements. At their core, these systems use transformer-based models fine-tuned on domain-specific datasets, including historical network performance logs, customer service transcripts, technical documentation, and operational procedures. Training optimizes billions of parameters so that the models capture telecom-specific context, from technical jargon in trouble tickets to subtle patterns indicating impending network failures.

The Architecture Behind Generative AI Network Operations

Generative AI systems managing telecommunications networks operate through multi-layered architectures that separate data ingestion, processing, decision-making, and execution functions. The ingestion layer continuously collects structured and unstructured data from network elements, customer touchpoints, billing systems, and external sources. This data flows into preprocessing pipelines that normalize formats, handle missing values, and apply domain-specific transformations that prepare raw telemetry for model consumption. The volume is substantial: a mid-sized carrier processes terabytes of network data daily, which requires distributed processing frameworks that can handle real-time analytics at scale.
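A minimal sketch of the normalization and missing-value steps described above, written in Python. The field names (`cell_id`, `cellId`, `rsrp_dbm`, `RSRP`, `ts`) and the forward-fill policy are assumptions chosen for illustration; a production pipeline would run equivalent logic inside a distributed processing framework rather than on in-memory lists.

```python
def normalize_record(raw: dict) -> dict:
    """Map a raw telemetry record onto one schema (field names are hypothetical)."""
    value = raw.get("rsrp_dbm", raw.get("RSRP"))
    return {
        "cell_id": str(raw.get("cell_id", raw.get("cellId"))),
        "ts": int(raw["ts"]),
        "rsrp_dbm": float(value) if value is not None else None,
    }


def forward_fill(records: list, field: str) -> list:
    """Replace missing readings with the last value seen for the same cell."""
    last_seen = {}
    filled = []
    for rec in sorted(records, key=lambda r: r["ts"]):
        rec = dict(rec)  # copy so the input records are not mutated
        if rec[field] is None:
            rec[field] = last_seen.get(rec["cell_id"])  # stays None if no prior value
        else:
            last_seen[rec["cell_id"]] = rec[field]
        filled.append(rec)
    return filled


# Two vendors report the same metric under different names and types.
raw_feed = [
    {"cellId": "A1", "ts": "100", "RSRP": "-95.5"},
    {"cell_id": "A1", "ts": 160, "rsrp_dbm": None},
    {"cell_id": "B7", "ts": 130, "rsrp_dbm": -101},
]
clean = forward_fill([normalize_record(r) for r in raw_feed], "rsrp_dbm")
```

Forward-filling is only one possible policy; dropping the record or interpolating may suit other metrics better.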

The processing layer employs specialized generative models trained for specific telecommunications tasks. For network optimization, these models analyze traffic patterns, predict congestion points, and generate configuration recommendations. For customer service, conversational AI models process natural language queries, access knowledge bases, and generate contextually appropriate responses. What makes these implementations distinct is the integration of telecom domain knowledge directly into model architectures through custom tokenization schemes, attention mechanisms tuned for time-series network data, and output layers designed to generate telecom-specific artifacts like network configurations or service provisioning scripts.

Real-Time Decision Engines

The decision-making component is where generative AI in telecommunications differs most from other enterprise AI applications. Telecom networks cannot tolerate the latency of batch processing or cloud round trips for critical decisions. Modern implementations deploy edge-optimized models that run inference directly on network equipment or nearby edge computing resources. These models receive preprocessed network state information and generate decisions within milliseconds, such as whether to reroute traffic, adjust radio parameters, or trigger automated remediation procedures. The generative aspect is crucial here: these systems don't merely classify situations but generate novel configuration states optimized for current conditions that may not match historical patterns.

Behind the scenes, these decision engines maintain sophisticated state management systems that track network topology, active policies, ongoing maintenance activities, and historical context. When generating recommendations, the AI doesn't operate in isolation but considers this broader operational context. For instance, when generating a network optimization plan, the system accounts for scheduled maintenance windows, contractual service level agreements, and current capacity utilization across interconnected network segments. This contextual awareness transforms generative models from simple pattern matchers into genuinely useful operational tools.
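One way to picture this contextual check is as a filter applied to candidate actions before any of them is executed. The sketch below is illustrative only: the `Candidate` and `OperationalContext` types, the segment names, and the single utilization ceiling standing in for SLA commitments are all assumptions, not a description of any particular vendor's engine.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    segment: str
    added_load_pct: float  # extra utilization the change would place on the segment
    start_hour: int        # hour of day (0-23) at which the change would be applied


@dataclass
class OperationalContext:
    maintenance_windows: dict   # segment -> (start_hour, end_hour), end exclusive
    utilization_pct: dict       # segment -> current utilization
    max_utilization_pct: float  # ceiling assumed to be derived from SLA commitments


def is_allowed(c: Candidate, ctx: OperationalContext) -> bool:
    """Reject changes that collide with maintenance or would breach the ceiling."""
    window = ctx.maintenance_windows.get(c.segment)
    if window and window[0] <= c.start_hour < window[1]:
        return False
    projected = ctx.utilization_pct.get(c.segment, 0.0) + c.added_load_pct
    return projected <= ctx.max_utilization_pct


ctx = OperationalContext(
    maintenance_windows={"metro-east": (1, 4)},
    utilization_pct={"metro-east": 60.0, "metro-west": 78.0},
    max_utilization_pct=85.0,
)
candidates = [
    Candidate("metro-east", 10.0, 2),  # falls inside the maintenance window
    Candidate("metro-east", 10.0, 9),  # acceptable
    Candidate("metro-west", 10.0, 9),  # would reach 88%, above the 85% ceiling
]
allowed = [c for c in candidates if is_allowed(c, ctx)]
```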

How Generative AI Actually Processes Customer Interactions

Customer service is one of the most visible applications of generative AI in telecommunications, yet the underlying mechanics involve far more complexity than conversational interfaces suggest. When a customer initiates contact through voice, chat, or messaging channels, the system orchestrates multiple AI components working in concert. Speech recognition models transcribe voice inputs, natural language understanding models extract intent and entities, customer data platforms retrieve relevant account information, and generative language models formulate responses. This orchestration happens in real time, typically within seconds, and requires carefully engineered pipelines that balance accuracy with responsiveness.

The generative models powering these interactions are fine-tuned on millions of historical customer service interactions, technical support documentation, product catalogs, and troubleshooting procedures. However, effective telecom AI strategies go beyond simple fine-tuning. Production systems implement retrieval-augmented generation, in which models query real-time databases, knowledge graphs, and documentation repositories to ground their responses in current, factual information. When a customer asks about their bill or service options, the model generates a response by combining its learned language patterns with retrieved data specific to that customer's account, plan details, and available offers.
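The retrieval-augmented flow can be sketched in a few lines of Python. This is a simplified illustration: word overlap stands in for the vector or knowledge-graph search a production system would use, the knowledge-base entries and account fields are invented, and the call to the language model itself is left out because it depends on the provider.

```python
import re

# Hypothetical knowledge-base snippets; real systems query live repositories.
KNOWLEDGE_BASE = [
    {"id": "kb-roaming", "text": "Roaming charges apply when using data outside the home network."},
    {"id": "kb-upgrade", "text": "Customers can upgrade their plan at any time from the account page."},
    {"id": "kb-outage", "text": "Report an outage through the support line or the mobile app."},
]


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, docs: list, k: int = 1) -> list:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d["text"])), reverse=True)
    return ranked[:k]


def build_prompt(query: str, account: dict, passages: list) -> str:
    """Combine retrieved passages and account data into the prompt sent to the model."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context and account data below.\n"
        f"Context:\n{context}\n"
        f"Account: plan={account['plan']}, balance_due={account['balance_due']}\n"
        f"Customer question: {query}"
    )


question = "Why do I have roaming charges on my bill?"
account = {"plan": "Unlimited 5G", "balance_due": "42.10"}
prompt = build_prompt(question, account, retrieve(question, KNOWLEDGE_BASE))
```

Because the account data and passages are injected at request time, the model's answer can reflect the customer's current plan without retraining.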

Organizations implementing these systems often partner with specialized providers to ensure robust deployment. Effective AI solution engineering addresses the particular challenges of telecommunications environments, including integration with legacy systems, compliance with data privacy regulations, and performance optimization for carrier-grade reliability. The technical implementation typically involves containerized microservices, API gateways that manage authentication and rate limiting, and monitoring systems that track model performance metrics such as response accuracy, latency percentiles, and customer satisfaction scores.

Network Optimization Mechanics: From Data to Action

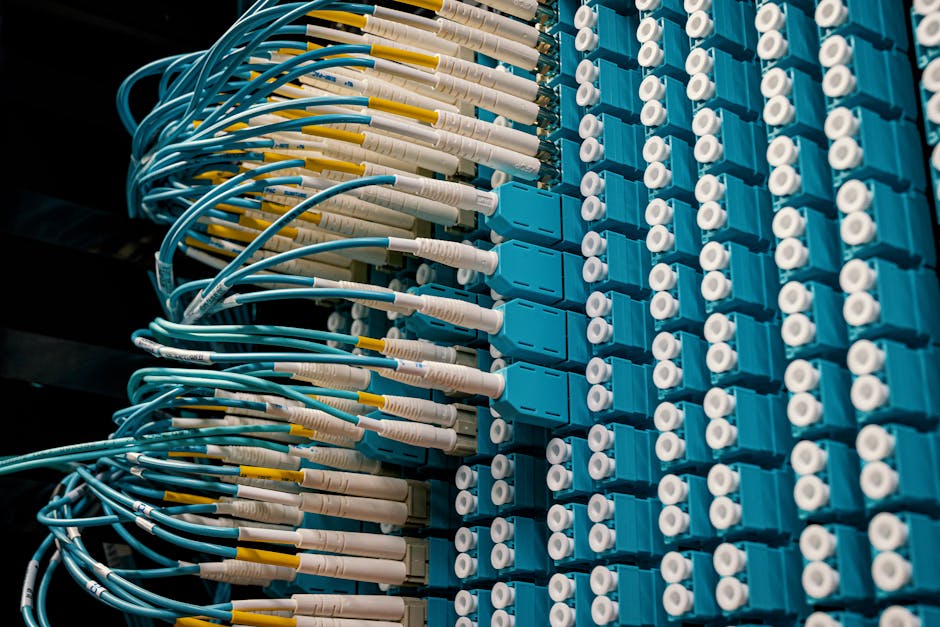

Generative AI's role in network optimization operates through continuous cycles of observation, prediction, generation, and execution. The observation phase collects granular metrics from network elements—signal strength readings from cell towers, packet loss rates from routers, bandwidth utilization across fiber links, and quality of service metrics from endpoints. This data flows into time-series databases designed to handle the write-heavy workloads characteristic of telecom networks, where thousands of metrics update every second across distributed infrastructure.

Prediction models analyze these time-series patterns to forecast future network states. Unlike traditional threshold-based alerting, generative models learn complex temporal dependencies that reveal subtle precursors to network issues. These models might identify that a specific combination of traffic patterns, environmental conditions, and equipment temperatures tends to precede service degradation events several hours in advance. This predictive capability enables proactive intervention rather than reactive troubleshooting.
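The difference between threshold alerting and forecasting can be shown with a deliberately simple stand-in. The sketch below fits a straight line to recent readings and estimates when the trend will cross a limit; it is not a generative model, and the temperature values and the 80 degree limit are invented for illustration. The point is only that a forecast can raise a warning while every individual reading is still below the alert threshold.

```python
def linear_trend(values: list) -> tuple:
    """Least-squares slope and intercept over equally spaced samples."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def steps_until(values: list, threshold: float):
    """Samples until the fitted trend reaches the threshold, or None if it never does."""
    slope, intercept = linear_trend(values)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - (len(values) - 1))


temps_c = [60.0, 62.0, 64.0, 66.0, 68.0]  # hypothetical equipment temperatures
LIMIT_C = 80.0

threshold_alert = max(temps_c) >= LIMIT_C   # classic alerting: nothing fires yet
lead_time = steps_until(temps_c, LIMIT_C)   # forecast: samples left before the limit
```

A learned model would replace the straight-line fit with patterns spanning many correlated metrics, but the operational benefit is the same lead time before a fault.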

Configuration Generation and Validation

When optimization opportunities are identified, generative models produce candidate configurations designed to improve network performance. This generation process accounts for multiple objectives simultaneously—maximizing throughput, minimizing latency, balancing load across resources, and maintaining service quality guarantees. The models output structured configuration artifacts in formats like JSON, YAML, or vendor-specific configuration languages, ready for deployment to network elements.

Before execution, these generated configurations undergo automated validation in simulation environments that model network behavior. Telecommunications deployments increasingly use digital twins, in which proposed changes are tested against virtual replicas of production networks. Only configurations that pass the validation criteria, meeting performance targets without introducing instability or violating policies, proceed to deployment. This validation loop provides crucial safety guardrails, ensuring that AI-generated changes enhance rather than disrupt network operations.
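A full digital twin is beyond a short example, but the gate in front of it can be sketched. The Python below treats model output as untrusted text: it parses the JSON, checks required keys, and applies range limits before anything is passed on to simulation or deployment. The parameter names and limits in `POLICY` are hypothetical; real limits would come from vendor specifications and operator policy.

```python
import json

# Hypothetical limits used only for this illustration.
POLICY = {
    "tx_power_dbm": (0, 46),
    "antenna_tilt_deg": (0, 15),
}
REQUIRED_KEYS = {"cell_id", "tx_power_dbm", "antenna_tilt_deg"}


def validate_config(raw_json: str) -> list:
    """Return a list of violations; an empty list means the candidate may proceed."""
    try:
        cfg = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(cfg, dict):
        return ["top-level value must be an object"]
    errors = [f"missing key: {k}" for k in sorted(REQUIRED_KEYS - set(cfg))]
    for key, (low, high) in POLICY.items():
        if key not in cfg:
            continue  # already reported as missing
        value = cfg[key]
        if not (isinstance(value, (int, float)) and low <= value <= high):
            errors.append(f"{key}={value!r} outside [{low}, {high}]")
    return errors


good = '{"cell_id": "A1", "tx_power_dbm": 40, "antenna_tilt_deg": 6}'
bad = '{"cell_id": "A1", "tx_power_dbm": 55}'
good_errors = validate_config(good)
bad_errors = validate_config(bad)
```

Returning every violation rather than stopping at the first makes the result usable as feedback to the generating model on a retry.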

The Training and Fine-Tuning Process

Creating effective generative AI models for telecommunications requires specialized training approaches that differ from general-purpose language models. The process begins with foundation models pretrained on broad corpora, then applies successive fine-tuning stages using telecom-specific datasets. Initial fine-tuning focuses on domain adaptation, exposing models to technical documentation, standards specifications, and industry terminology until they develop fluency in telecommunications concepts.

Subsequent fine-tuning stages target specific use cases. A model destined for customer service undergoes training on historical support interactions, learning to recognize common issues, effective resolution patterns, and appropriate communication styles. Models supporting network operations train on configuration files, change management logs, and incident reports, learning the relationships between network states and optimal configurations. This multi-stage approach builds specialized expertise while retaining the general reasoning capabilities of foundation models.

Continuous learning mechanisms keep deployed models current as networks evolve. Feedback loops collect data on model predictions versus actual outcomes—whether a generated customer response resolved the issue, whether a configuration change achieved its performance objectives, or whether a predicted network event materialized. This feedback informs regular retraining cycles that adapt models to changing network characteristics, new service offerings, and evolving customer needs. The infrastructure supporting this continuous learning represents a significant technical investment, requiring MLOps platforms managing model versioning, A/B testing frameworks comparing model variants, and automated retraining pipelines.
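The feedback loop can be reduced to a small monitor that compares predictions with outcomes over a sliding window and flags when accuracy drops. This is a sketch under stated assumptions: the `FeedbackMonitor` class, the window size, and the accuracy floor are illustrative, and a real MLOps platform would add versioning, A/B comparison, and the retraining job itself.

```python
from collections import deque


class FeedbackMonitor:
    """Tracks whether recent predictions matched outcomes and flags retraining."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9):
        self.results = deque(maxlen=window)  # oldest results fall off automatically
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual) -> None:
        self.results.append(predicted == actual)

    def accuracy(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def needs_retraining(self) -> bool:
        # Wait for a full window so a few early misses do not trigger retraining.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = FeedbackMonitor(window=4, min_accuracy=0.75)
# (predicted, actual): did a predicted congestion event materialize?
outcomes = [(True, True), (True, False), (False, False), (True, False)]
for predicted, actual in outcomes:
    monitor.record(predicted, actual)

retrain = monitor.needs_retraining()  # two of four predictions matched
```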

Integration with Telecommunications Infrastructure

Deploying generative AI in telecommunications requires deep integration with existing telecom infrastructure, much of which predates modern cloud-native architectures. Practical implementations bridge legacy systems through API gateways and integration middleware that translate between modern microservices and traditional telecom protocols. For instance, connecting generative AI systems to Operations Support Systems (OSS) and Business Support Systems (BSS) involves adapting to network management protocols like TL1, SNMP, and NETCONF while exposing modern RESTful or GraphQL interfaces to AI services.
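The translation role of integration middleware follows the adapter pattern, sketched below in Python. Everything here is hypothetical: the `LIST-ALARMS` command and the pipe-delimited reply are made up for the example and are not real TL1, SNMP, or NETCONF syntax, and `fake_element` stands in for a network connection. The sketch only shows how a legacy text interface can be hidden behind a uniform method that a REST handler calls.

```python
from abc import ABC, abstractmethod


class ElementAdapter(ABC):
    """Uniform interface the AI services call, whatever the element speaks."""

    @abstractmethod
    def get_alarms(self, element_id: str) -> list:
        """Return alarms as dicts with 'element', 'severity', and 'text' keys."""


class LegacyTextAdapter(ElementAdapter):
    """Parses a made-up pipe-delimited reply; this is not real TL1 syntax."""

    def __init__(self, send_command):
        self._send = send_command  # callable that talks to the element

    def get_alarms(self, element_id: str) -> list:
        reply = self._send(f"LIST-ALARMS {element_id}")
        alarms = []
        for line in reply.splitlines():
            if not line.strip():
                continue
            severity, text = line.split("|", 1)
            alarms.append(
                {"element": element_id, "severity": severity.strip(), "text": text.strip()}
            )
        return alarms


def alarms_endpoint(adapter: ElementAdapter, element_id: str) -> dict:
    """Shape of the JSON body a REST handler could return to AI services."""
    return {"element": element_id, "alarms": adapter.get_alarms(element_id)}


def fake_element(command: str) -> str:
    # Stand-in for a network session with a legacy element.
    return "MAJOR | Loss of signal on port 3\nMINOR | Fan speed high\n"


body = alarms_endpoint(LegacyTextAdapter(fake_element), "olt-17")
```

Adding support for another protocol then means writing one more `ElementAdapter` subclass, with no change to the services that consume the endpoint.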

Data governance becomes particularly complex in these integrated environments. Generative models require access to customer data, network telemetry, and operational information, yet telecommunications providers operate under strict regulatory requirements regarding data handling, retention, and privacy. Technical implementations employ privacy-preserving techniques including data anonymization, differential privacy during training, and secure enclaves for processing sensitive information. The architecture ensures AI systems can learn from comprehensive datasets while maintaining compliance with regulations like GDPR, CCPA, and telecom-specific frameworks.
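Two of these techniques can be illustrated briefly in Python. The first function pseudonymizes subscriber identifiers with a keyed hash; the second applies the Laplace mechanism to a counting query, which is a simpler form of differential privacy than the training-time variants mentioned above. The key, the identifiers, and the epsilon value are assumptions, and the standard-library `random` module is used only for readability; a production system would need a vetted differential privacy library.

```python
import hashlib
import hmac
import random


def pseudonymize(subscriber_id: str, secret_key: bytes) -> str:
    """Keyed hash: the same subscriber always maps to the same token, without exposing the ID."""
    return hmac.new(secret_key, subscriber_id.encode(), hashlib.sha256).hexdigest()[:16]


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two exponential draws with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query, whose sensitivity is 1."""
    return true_count + laplace_noise(1.0 / epsilon, rng)


key = b"example-key-rotate-in-production"  # hypothetical secret
token_a = pseudonymize("447700900123", key)
token_b = pseudonymize("447700900456", key)

rng = random.Random(7)  # seeded only so the example is repeatable
noisy_dropped_calls = private_count(1000, epsilon=1.0, rng=rng)
```

A smaller epsilon adds more noise and gives stronger privacy at the cost of accuracy, which is the trade-off operators have to tune per use case.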

Edge Deployment for Low-Latency Applications

Many generative AI applications in telecommunications require deployment at network edges rather than in centralized data centers. Use cases like real-time network optimization, fraud detection, and quality of experience monitoring cannot tolerate the latency of backhauling data to central processing locations. Edge deployments rely on model compression techniques such as quantization, pruning, and knowledge distillation, which shrink models enough to run on resource-constrained edge hardware while preserving most of their accuracy.
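Quantization is the easiest of these techniques to show in miniature. The sketch below applies symmetric per-tensor int8 quantization to a short list of made-up weights in plain Python; real toolchains operate on whole tensors and often calibrate per channel, so treat this as an illustration of the arithmetic rather than a deployment recipe.

```python
def quantize_int8(weights: list) -> tuple:
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list, scale: float) -> list:
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in quantized]


weights = [0.82, -1.27, 0.003, 0.5]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale factor.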

These edge AI systems operate with intermittent connectivity to central management platforms, so they need robust local decision-making and eventual consistency mechanisms for synchronizing state. When edge nodes detect situations requiring immediate action, they generate and execute responses autonomously, then report outcomes to central systems for monitoring and learning purposes. This distributed architecture lets generative AI scale across vast geographic footprints while maintaining the responsiveness critical for carrier-grade operations.
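One common way to get eventual consistency is a last-writer-wins merge, sketched below. The state layout is an assumption made for the example: each key maps to a `(version, node_id, value)` tuple, and the `(version, node_id)` pair is taken to identify a write uniquely. Ordering by that pair makes the merge give the same result regardless of which side initiates the sync.

```python
def merge_state(a: dict, b: dict) -> dict:
    """Last-writer-wins merge; each entry is (version, node_id, value).

    The higher (version, node_id) pair wins, so merging is commutative
    and repeating a merge changes nothing.
    """
    merged = dict(a)
    for key, entry in b.items():
        current = merged.get(key)
        if current is None or entry[:2] > current[:2]:
            merged[key] = entry
    return merged


# Hypothetical state after an edge node acted while disconnected.
edge = {
    "cell-A1/tilt": (3, "edge-12", 6),
    "cell-A1/power": (1, "edge-12", 40),
}
central = {
    "cell-A1/tilt": (2, "central", 4),
    "cell-B7/tilt": (1, "central", 8),
}

synced_at_edge = merge_state(edge, central)
synced_at_central = merge_state(central, edge)
```

Last-writer-wins silently discards the losing write, so it suits settings where the newest value is authoritative; conflicting operator intents would need a richer resolution rule.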

Conclusion

Understanding how generative AI in telecommunications actually works reveals a sophisticated technical ecosystem far removed from simple chatbot implementations. These systems orchestrate multiple specialized AI models, integrate deeply with telecom infrastructure, operate under strict latency and reliability requirements, and continuously learn from operational feedback. The mechanics involve custom model architectures, domain-specific training procedures, privacy-preserving data handling, and distributed deployment patterns tailored to telecommunications environments. For organizations embarking on this transformation, a comprehensive AI implementation roadmap provides a structured approach to navigating the technical complexity, aligning stakeholders around realistic timelines, and building the specialized capabilities required for successful deployment. As these technologies mature, the telecommunications industry is developing genuine expertise in operationalizing generative AI at scale, establishing patterns and practices that will define carrier operations for the coming decade.
